HDF5 is your big training set helper

As we have discussed before in our ongoing explanation of deep learning systems, one of the key things that helped the current deep learning revolution move forward was access to very large training sets. These could be images, speech samples, a text corpus, or whatever you are trying to learn the statistics of with your neural net.

So what is HDF5?

It's a library and API designed to work with very large collections of heterogeneous data. It's specifically built around fast I/O processing. It supports n-dimensional data sets. Each element in a data set can itself be a complex object if you want it to be. It's cross-platform. It's a portable format with no vendor lock-in. All data and metadata can be stored in one file. The list goes on.
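To make a couple of those features concrete, here is a minimal sketch, assuming the `h5py` and NumPy packages are installed. The file name, the dataset names (`images`, `labels`) and the attribute names are made up for illustration, and the random array is just a stand-in for real image data.

```python
import os
import tempfile

import h5py
import numpy as np

# Hypothetical file location, chosen only for this illustration.
path = os.path.join(tempfile.mkdtemp(), "training_set.h5")

# Toy stand-in for real data: 10 RGB images of 32x32 pixels, plus 10 labels.
images = np.random.randint(0, 256, size=(10, 32, 32, 3), dtype=np.uint8)
labels = np.arange(10, dtype=np.int64)

with h5py.File(path, "w") as f:
    # A 4-dimensional dataset and a 1-dimensional dataset live side by side.
    dset = f.create_dataset("images", data=images)
    f.create_dataset("labels", data=labels)
    # Metadata is stored as attributes, in the same file as the data.
    dset.attrs["description"] = "toy RGB images"
    dset.attrs["pixel_range"] = [0, 255]

# Reopen the file read-only and look at what was stored.
with h5py.File(path, "r") as f:
    stored_names = sorted(f.keys())
    stored_shape = f["images"].shape
    stored_description = f["images"].attrs["description"]

print(stored_names, stored_shape, stored_description)
```

Everything, arrays and metadata alike, ends up in the single `training_set.h5` file.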

In some sense it's like a custom file system designed for fast access to data sets that can't fit in memory.
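The "can't fit in memory" part is worth a quick sketch. Assuming `h5py` and NumPy, slicing a dataset reads only the requested rows from disk, so you can walk through a file one batch at a time. The dataset name `samples`, the sizes, and the helper `iter_batches` are hypothetical, and the array here is small enough to fit in memory; it only illustrates the access pattern.

```python
import os
import tempfile

import h5py
import numpy as np

# Hypothetical file location for this illustration.
path = os.path.join(tempfile.mkdtemp(), "big_array.h5")

# Write the dataset block by block, never holding the whole array in memory.
with h5py.File(path, "w") as f:
    dset = f.create_dataset(
        "samples", shape=(1000, 64), dtype="float32", chunks=(100, 64)
    )
    for start in range(0, 1000, 100):
        # Each block of 100 rows is filled with its own starting row index.
        dset[start:start + 100] = np.full((100, 64), start, dtype=np.float32)


def iter_batches(file_path, name, batch_size):
    """Yield consecutive slices of a dataset as NumPy arrays."""
    with h5py.File(file_path, "r") as f:
        dset = f[name]
        for start in range(0, dset.shape[0], batch_size):
            # Slicing reads just these rows from disk into a NumPy array.
            yield dset[start:start + batch_size]


batch_shapes = [batch.shape for batch in iter_batches(path, "samples", 250)]
print(batch_shapes)
```

A training loop could consume `iter_batches` directly, which is the kind of access pattern a neural net with a very large training set needs.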

But wait, you say, I already have a file system. And it's pretty straightforward to use. Why do I need another one?

Rebecca Stone has a great tutorial that explains why. She also provides a good 'let's use HDF5 with Python' set of code examples to show how to interface to it. We're going to steal from her tutorial a little bit below.

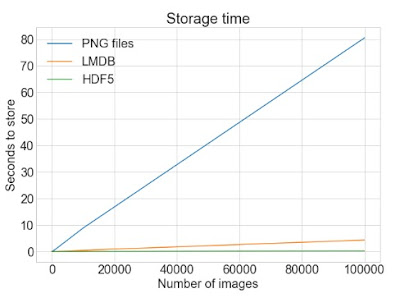

So, let's contrast the time it takes to store 100,000 images associated with a training set. You could certainly just write them all out to disk as individual PNG images. Or you could use HDF5 and store them together as array data inside an HDF5 file.

The read time graph looks very similar. Hopefully it is now obvious why HDF5 might be of interest to you, especially if you want to work with Google-sized training sets for your neural net.
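If you want a rough feel for this kind of comparison on your own machine, here is a small sketch, assuming `h5py` and NumPy. It is not the benchmark from the tutorial: it uses only 200 random toy images, and `np.save` stands in for a PNG writer to keep the example free of extra dependencies. The timings will vary from machine to machine, so treat the numbers as illustrative only.

```python
import os
import tempfile
import time

import h5py
import numpy as np

# Toy stand-in for a training set: 200 random 32x32 RGB images.
num_images = 200
images = np.random.randint(0, 256, size=(num_images, 32, 32, 3), dtype=np.uint8)
workdir = tempfile.mkdtemp()

# Option 1: one file per image. np.save is an assumption standing in for a
# PNG writer, so this measures per-file overhead rather than PNG encoding.
start = time.perf_counter()
for i, image in enumerate(images):
    np.save(os.path.join(workdir, f"image_{i:05d}.npy"), image)
per_file_seconds = time.perf_counter() - start

# Option 2: a single HDF5 file holding all the images in one dataset.
h5_path = os.path.join(workdir, "images.h5")
start = time.perf_counter()
with h5py.File(h5_path, "w") as f:
    f.create_dataset("images", data=images)
hdf5_seconds = time.perf_counter() - start

print(f"one file per image: {per_file_seconds:.4f} s")
print(f"single HDF5 file:   {hdf5_seconds:.4f} s")
```

Scaling `num_images` up gives a better picture of how the two approaches diverge as the training set grows.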

And of course there is a nice Python interface to HDF5.

In future posts, we'll discuss more specifically how to work with HDF5 when training deep learning nets using Keras.

So what's the tradeoff, you might be thinking? Surely there is no free lunch. And yes, you are trading additional disk space for that fast access when using HDF5.
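One way to see where the extra space goes: by default the pixel arrays are stored raw, whereas PNG compresses each image. The sketch below, assuming `h5py` and NumPy, writes the same toy data twice, once as is and once with HDF5's built-in gzip filter (an option not covered above, shown only to illustrate the space versus speed tradeoff). The all-black images are deliberately easy to compress, so real images will shrink far less.

```python
import os
import tempfile

import h5py
import numpy as np

workdir = tempfile.mkdtemp()
raw_path = os.path.join(workdir, "raw.h5")
gzip_path = os.path.join(workdir, "gzip.h5")

# Highly compressible toy data: 100 all-black 64x64 RGB images.
images = np.zeros((100, 64, 64, 3), dtype=np.uint8)

# Default storage: the array bytes are written uncompressed.
with h5py.File(raw_path, "w") as f:
    f.create_dataset("images", data=images)

# Same data with the gzip filter: smaller on disk, but every read and write
# now pays for compression or decompression.
with h5py.File(gzip_path, "w") as f:
    f.create_dataset("images", data=images, compression="gzip")

raw_size = os.path.getsize(raw_path)
gzip_size = os.path.getsize(gzip_path)

print(f"array bytes in memory: {images.nbytes}")
print(f"uncompressed HDF5:     {raw_size}")
print(f"gzip-compressed HDF5:  {gzip_size}")
```

Whether compression is worth it depends on whether disk space or access speed matters more for your training setup.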

